[This article is a follow-up on the theme of data structures started in my article on a Non-Binary Tree Data Structure.]

Clear API Design

When designing object models, there are often times when a Dictionary is the best choice for rapid lookup and access of items. However, when attempting to make those object models as intuitive and simple as possible, sometimes the fact that a dictionary is being used is an implementation detail and needn’t be exposed externally.

Take this model, for example:

class Database

{

public IEnumerable<Table> Tables;

public Database()

{

_Tables = new List<Table>();

}

}

class Table

{

public string TableName;

public Table(string TableName)

{

this.TableName = TableName;

}

}

This is nice and simple. I can loop through the Tables collection like this:

Database db = new Database();

foreach (var table in db.Tables)

{

Console.WriteLine(table.TableName);

}

So it's too bad that the consumer end of this has to change when we use a dictionary. By making a change to our Database type...

class Database

{

public Dictionary<string, Table> Tables;

public Database()

{

Tables = new Dictionary<string, Table>();

}

}

... we now have to specify the "Values" collection to get an IEnumerable<T> of the correct type.

foreach (var table in db.Tables.Values)

{

Console.WriteLine(table.TableName);

}

The consumers of our API may not care about iterating through key-value pairs, but now they have to remember to use this Values property or face the wrath of red squiggles and compiler errors when they forget. Of course, there’s a pattern we could use to hide this dictionary inner goo from the outside.

class Database

{

public IEnumerable<Table> Tables { get { return _Tables.Values; } }

private Dictionary<string, Table> _Tables { get; set; }

public Database()

{

_Tables = new Dictionary<string, Table>();

}

}

Now from the outside, we can foreach over db.Tables, but inside we can use the Dictionary for fast access to elements by key.

The Need for a Dictionary-List Hybrid

This is an either-or approach: that is, it assumes that the API consumer is better off with an IEnumerable collection and won’t have any need for keyed access to data (or even adding data, in this case). How can we have the best of both words, with the ability to write this kind of code?

Database db = new Database();

foreach (var table in db.Tables)

{

Console.WriteLine(table.TableName);

}

Table t = db.Tables["Customers"];

This is a hybrid of a Dictionary and a List. (Don’t confuse this with a HybridDictionary, which is purely a dictionary with runtime-adapting storage strategies.) It provides an IEnumerable<T> enumerator (instead of IEnumerable<K, T>), as well as an indexer for convenient lookup by key.

There’s another aspect of working with dictionaries that has always bugged me:

db.Tables.Add("Vendors", new Table("Vendors"));

This is repetitive, plus it says the same thing twice. What if I misspell my key in one of these two places? What I’d really like is to tell my collection which property of the Table class to use, and have it fill in the key for me. How can I do that? Well, I know I can select a property value concisely (in a compiler-checked and refactoring-friendly way) with a lambda expression. So perhaps I can supply that expression in the collection’s constructor. I decided to call my new collection KeyedList<K, T>, which inherits from Dictionary so I don’t have to do all the heavy lifting. Here’s how construction looks:

Tables = new KeyedList<string, Table>(t => t.TableName);

Now I can add Table objects to my collection, and the collection will use my lambda expression to fill in the key for me.

Tables.Add(new Table("Vendors"));

How does this work, exactly? Here's a first cut at our KeyedList class:

public class KeyedList<K, T> : Dictionary<K, T>, IEnumerable<T>

{

private Func<T, K> LinkedValue;

public KeyedList()

{

LinkedValue = null;

}

public KeyedList(Func<T, K> LinkedValue)

{

this.LinkedValue = LinkedValue;

}

public void Add(T item)

{

if (LinkedValue == null)

throw new InvalidOperationException("Can't call KeyedList<K, T>.Add(T) " +

"unless a LinkedValue function has been assigned");

Add(LinkedValue(item), item);

}

public new IEnumerator<T> GetEnumerator()

{

foreach (var item in Values)

{

yield return item;

}

yield break;

}

}

This is still pretty simple, but I can think of one thing that it’s missing (aside from a more complete IList<T> implementation). With a collection class like this, with tightly-integrated knowledge about the relationship between the key property of an item and the key in the Dictionary, what happens when we change that key property in the item? Suddenly it doesn’t match the dictionary key, and we have to remember to update this in an explicit separate step in our code whenever this happens. It seems that this is a great opportunity to forget something and introduce a bug into our code. How could our KeyedCollection class track and update this for us?

Unfortunately, there’s no perfect solution. “Data binding” in .NET is weak in my opinion, and requires implementation of INotifyPropertyChanged in our classes to participate; and when it does so, we only get notification of the property name that changed (supplied as a string), and have no idea what the old value was unless we store that somewhere ourselves. Automatically injecting all classes with data binding code isn’t practical, of course, even using AOP (since many BCL classes, for example, reside in signed assemblies). Hopefully a future CLR will be able to perform some tricks, such as intelligently and dynamically modifing those classes, for which other class’s data binding code specify interest, so we can have effortless and universal data binding.

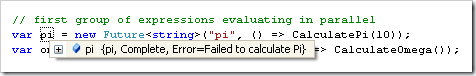

Now back to reality. I want to mention that although my code typically works just as well in Compact Framework as it does in Full Framework, I’m going off the reservation here. I’m going to be using expression trees, which are not supported in Compact Framework at all.

Expressions

The Expression class (in System.Linq.Expressions) is really neat. With it, you can wrap a delegate type to create an expression tree, which you can explore and modify, and at some point even compile into a function which you can invoke. The best part is that lambda expressions can be assigned to Expression types in the same way that they can be assigned to normal delegates.

Func<int> func = () => 5;

Expression<Func<int>> expr = () => 5;

The first line defines a function that returns an int, and a function is supplied as a lambda that returns the constant 5. The second line defines an expression tree of a function that returns an int. This extra level of indirection allows us to take a step back and look at the structure of the function itself in a precompiled state. The structure is a tree, which can be arbitrarily complex. You can think of this as a way of modeling the expression in a data structure. While func can be executed immediately, expr requires that we compile it by calling the Compile method (which generates IL for the method and returns Func<int>).

int FuncResult1 = func.Invoke();

int FuncResult2 = func();

int ExprResult1 = expr.Compile().Invoke();

int ExprResult2 = expr.Compile()();

The first two lines are equivalent, as are the last two. I just wanted to point out here, with the two ways of calling the functions, how they are in fact the same, even though the last line looks funky.

Synchronizing Item & Dictionary Keys

So why do we need expressions? Because we need to know the name of the property we’ve supplied in our KeyedList constructor. You can’t extract that information out of a function (supplied as a lambda expression or otherwise). But expressions contain all the metadata we need. Note that for this synchronization to work, it requires that the items in our collection implement INotifyPropertyChanged.

class Table : INotifyPropertyChanged

{

public event PropertyChangedEventHandler PropertyChanged;

private string _TableName;

public string TableName

{

get { return _TableName; }

set

{

_TableName = value;

if (PropertyChanged != null)

PropertyChanged(this, new PropertyChangedEventArgs("TableName"));

}

}

public Table(string TableName)

{

this.TableName = TableName;

}

}

This is tedious work, and though there are some patterns and code snippets I use to ease the burden a little, it’s still a lot of work to go through to implement such a primitive ability as data binding.

In order to get at the expression metadata, we’ll have to update our constructor to ask for an expression:

public KeyedList(Expression<Func<T, K>> LinkedValueExpression)

{

this.LinkedValueExpression = LinkedValueExpression;

}

We’ll also need to define a field to store this, and a property will help to compile it for us.

private Func<T, K> LinkedValue;

private Expression<Func<T, K>> _LinkedValueExpression;

public Expression<Func<T, K>> LinkedValueExpression

{

get { return _LinkedValueExpression; }

set

{

_LinkedValueExpression = value;

LinkedValue = (value == null) ? null : _LinkedValueExpression.Compile();

}

}

Now that the groundwork has been set, we can hook into the PropertyChanged event if it’s implemented, which we do by shadowing the Add method.

public new void Add(K key, T item)

{

base.Add(key, item);

if (typeof(INotifyPropertyChanged).IsAssignableFrom(typeof(T)))

(this[key] as INotifyPropertyChanged).PropertyChanged += new PropertyChangedEventHandler(KeyList_PropertyChanged);

}

One caveat about this approach: our shadowing method Add will unfortunately not be called if accessed through a variable of the base class. That is, if you assign a KeyedList object to a Dictionary object, and call Add from that Dictionary variable, the Dictionary.Add method will be called and not KeyedList.Add, so synchronization of keys will not work properly in that case. It’s extremely rare that you’d do such a thing, but I want to point it out regardless. As inheritor of a base class, I would prefer the derived class be in fuller control of these behaviors, but we work with what we have. I’ll actually take advantage of this later on in a helper method.

Finally, the tricky part. We need to examine our lambda’s expression tree and extract the property or field name from it. We’ll compare that to the property name reported to us as changed. The comparison is actually done between two MemberInfo variables, which is possible because reflection ensures that only one MemberInfo object will exist for each member. The MemberExpression object, which iniherits from Expression, possesses a Member property, and the other we get from typeof(T).GetMember. Here’s what that looks like:

private void KeyList_PropertyChanged(object sender, PropertyChangedEventArgs e)

{

var lambda = LinkedValueExpression as LambdaExpression;

if (lambda == null)

return;

var expr = lambda.Body as MemberExpression;

if (expr == null)

return;

MemberInfo[] members = typeof(T).GetMember(e.PropertyName, MemberTypes.Property | MemberTypes.Field, BindingFlags.Instance | BindingFlags.Public);

if (members.Length == 0)

throw new ApplicationException("Field or property " + e.PropertyName + " not found in type " + typeof(T).FullName);

MemberInfo mi = members[0];

if (mi == expr.Member)

{

// we don't know what the old key was, so we have to find the object in the dictionary

// then remove it and re-add it

foreach (var kvp in KeyValuePairs)

{

if ((typeof(T).IsValueType && kvp.Value.Equals(sender)) || (kvp.Value as object) == (sender as object))

{

T item = this[kvp.Key];

Remove(kvp.Key);

Add(item);

return;

}

}

}

}

This code makes an important assumption; namely, that a lambda expression will be used, which will contain a single field or property access. It does not support composite or calculated keys, such as (t.SchemaName + “.” + t.TableName), though it’s possible. I’m currently working on a method that recursively explores an Expression tree and checks for member access anywhere in the tree, to support scenarios like this. For now, and for the purpose of this article, we’ll stick to the simple case of single member access.

I found that having access to the list of KeyValuePairs was actually useful in my code, and to keep the PropertyChanged handler concise, I added a new KeyValuePairs property to expose the base Dictionary’s enumerator, which you can find in the complete listing of the KeyedList class toward the end of this article. I now have two iterators; and the way I’ve flipped it around, the default iterator of the base class has become a secondary, named iterator of KeyedList.

Here is a test program to demonstrate the functionality and flexibility of the KeyedList class.

class Program

{

static void Main(string[] args)

{

// use a lambda expression to select the member of Table to use as the dictionary key

var Tables = new KeyedList<string, Table>(t => t.TableName);

// add a Table object to our KeyList like an old-fashioned Dictionary

Tables.Add("Customers", new Table("Customers"));

// add a Table object to our KeyList without explicitly specifying a key

Tables.Add(new Table("Vendors"));

// prove that a change in an item's key property updates the corresponding dictionary key

Table Vendors = Tables["Vendors"];

Vendors.TableName = "VENDORS";

Console.WriteLine("Is 'Vendors' a valid dictionary key? " + Tables.ContainsKey("Vendors"));

Console.WriteLine("Is 'VENDORS' a valid dictionary key? " + Tables.ContainsKey("VENDORS"));

// prove that the IEnumerable<T> iterator works

// note that we don't have to loop through Tables.Values

foreach (var table in Tables)

{

Console.WriteLine(table.TableName);

}

Console.ReadKey();

}

}

Complete Source for KeyedList

For your convenience, here is the complete listing of KeyedList.

public class KeyedList<K, T> : Dictionary<K, T>, IEnumerable<T>

{

private Func<T, K> LinkedValue;

private Expression<Func<T, K>> _LinkedValueExpression;

public Expression<Func<T, K>> LinkedValueExpression

{

get { return _LinkedValueExpression; }

set

{

_LinkedValueExpression = value;

LinkedValue = (value == null) ? null : _LinkedValueExpression.Compile();

}

}

public KeyedList()

{

LinkedValueExpression = null;

}

public KeyedList(Expression<Func<T, K>> LinkedValueExpression)

{

this.LinkedValueExpression = LinkedValueExpression;

}

public void Add(T item)

{

if (LinkedValue == null)

throw new InvalidOperationException("Can't call KeyedList<K, T>.Add(T) " +

"unless a LinkedValue function has been assigned");

Add(LinkedValue(item), item);

}

public new void Add(K key, T item)

{

base.Add(key, item);

if (typeof(INotifyPropertyChanged).IsAssignableFrom(typeof(T)))

(this[key] as INotifyPropertyChanged).PropertyChanged += new PropertyChangedEventHandler(KeyList_PropertyChanged);

}

private void KeyList_PropertyChanged(object sender, PropertyChangedEventArgs e)

{

var lambda = LinkedValueExpression as LambdaExpression;

if (lambda == null)

return;

var expr = lambda.Body as MemberExpression;

if (expr == null)

return;

MemberInfo[] members = typeof(T).GetMember(e.PropertyName,

MemberTypes.Property | MemberTypes.Field,

BindingFlags.Instance | BindingFlags.Public);

if (members.Length == 0)

throw new ApplicationException("Field or property " + e.PropertyName + " not found in type " + typeof(T).FullName);

MemberInfo mi = members[0];

if (mi == expr.Member)

{

// we don't know what the old key was, so we have to find the object in the dictionary

// then remove it and re-add it

foreach (var kvp in KeyValuePairs)

{

if ((typeof(T).IsValueType && kvp.Value.Equals(sender))

|| (!typeof(T).IsValueType && (kvp.Value as object) == (sender as object)))

{

T item = this[kvp.Key];

Remove(kvp.Key);

Add(item);

return;

}

}

}

}

public new IEnumerator<T> GetEnumerator()

{

foreach (var item in Values)

{

yield return item;

}

yield break;

}

public IEnumerable<KeyValuePair<K, T>> KeyValuePairs

{

get

{

// because GetEnumerator is shadowed (to provide the more intuitive IEnumerable<T>),

// and foreach'ing over "base" isn't allowed,

// we use a Dictionary variable pointing to "this"

// so we can use its IEnumerable<KeyValuePair<K, T>>

Dictionary<K, T> dict = this;

foreach (var kvp in dict)

{

yield return kvp;

}

yield break;

}

}

}

Conclusion

As with the non-binary Tree data structure I created in this article, I prefer to work with more intelligent object containers that establish tighter integration between the container itself and the elements they contain. I believe this reduces mental friction, both for author and consumer of components (or the author and consumer roles when they are the same person), and allows a single generic data structure to be used where custom collection classes are normally defined. Additionally, by using data structures that expose more flexible surface areas, we can often reap the benefits of having powerful lookup features without locking ourselves out of the simple List facades that serve so well in our APIs.

The Achilles Heel of this solution is a weak and un-guaranteed data binding infrastructure combined with lack of support for Expressions in Compact Framework. In other words, items have to play along, and it’s not platform universal.

Clearly, more work needs to be done. Event handlers need to be unhooked when items are removed, optimizations could be done to speed up key synchronization, and more complex expressions could be supported without too much trouble. Ultimately what I’d like to see is a core collection class with the ability to add and access multiple indexes (such as with a database), instead of presuming that a Dictionary with its solitary key is all we can or should use. The hashed key of a Dictionary seems like a great adornment to an existing collection class, rather than a hard-coded stand-alone structure. But I think this is a good start toward addressing some of the fundamental shortcomings of these existing approaches, and hopefully demonstrating the value of more intelligent collection and container classes.

Other Implementations

I was at a loss for a while as to what to call this collection class; I considered DictionaryList (and ListDictionary), as well as HashedList, before arriving at KeyedList. To my amazement, I found several other implementations of the same kind of data structure with the same name, so it must be a good name. The implementations here and here are more complete than mine, but neither auto-assign keys with a key-selection function or update dictionary keys using data binding, which ultimately is what I’m emphasizing here. Hint: it wouldn’t be tough to combine what I have here with either of them.